Publications

Capturing longitudinal change in cerebellar ataxia: Context-sensitive analysis of real-life walking increases patient relevance and effect size

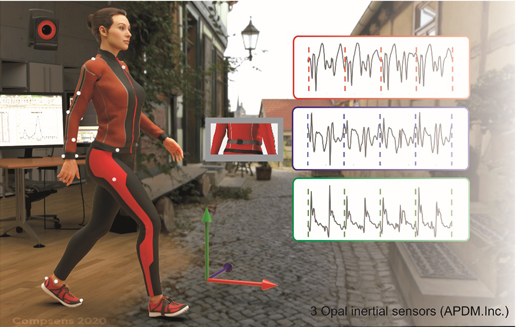

OBJECTIVES: With disease-modifying drugs for degenerative ataxias on the horizon, ecologically valid measures of motor performance that can detect patient-relevant changes in short, trial-like time frames are highly warranted. In this 2-year longitudinal study, we aimed to unravel and evaluate measures of ataxic gait which are sensitive to longitudinal changes in patients' real life by using wearable sensors. METHODS: We assessed longitudinal gait changes of 26 participants with degenerative cerebellar disease (SARA: 9.4 ± 4.1) at baseline, 1-year and 2-year follow-up assessment using 3 body-worn inertial sensors in two conditions: (1) laboratory-based walking (LBW); (2) real-life walking (RLW) during everyday living. In the RLW condition, a context-sensitive analysis was performed by selecting comparable walking bouts according to macroscopic gait characteristics, namely bout length and number of turns within a two-minute time interval. Movement analysis focussed on measures of spatio-temporal variability, in particular stride length variability, lateral step deviation, and a compound measure of spatial variability (SPCmp). RESULTS: Gait variability measures showed high test-retest reliability in both walking conditions (ICC > 0.82). Cross-sectional analyses revealed high correlations of gait measures with ataxia severity (SARA, effect size ρ ≥ 0.75), and in particular with patients' subjective balance confidence (ABC score, ρ ≥ 0.71), here achieving higher effect sizes for real-life than lab-based gait measures (e.g. SPCmp: RLW ρ=0.81 vs LBW ρ=0.71). While the clinician-reported outcome SARA showed longitudinal changes only after two years, the gait measure SPCmp revealed changes already after one year with high effect size (rprb=0.80). In the subgroup with spinocerebellar ataxia type 1, 2 or 3 (SCA1/2/3), the effect size was even higher (rprb=0.86).
Based on these effect sizes, sample size estimation for the gait measure SPCmp showed a required cohort size of n=42 participants (n=38 for the SCA1/2/3 subgroup) for detecting a 50% reduction of natural progression after one year by a hypothetical intervention, compared to n=254 for the SARA. CONCLUSIONS: Gait variability measures revealed high reliability and sensitivity to longitudinal change in both laboratory-based constrained walking and real-life walking. Due to their ecological validity and larger effect sizes, characteristics of real-life gait recordings are promising motor performance measures as outcomes for future treatment trials.

Competing Interest Statement: Dr Ilg received consultancy honoraria from Ionis Pharmaceuticals, unrelated to the present work. Mr Seemann reports no disclosures. Mrs Beyme reports no disclosures. Mrs John reports no disclosures. Mr Harmuth reports no disclosures. Prof Giese reports no disclosures. Prof Schoels served as advisor for Alexion, Novartis and Vico. He participates as a principal investigator in clinical studies sponsored by Vigil Neuroscience (VGL101-01.001; VGL101-01.002), Vico Therapeutics (VO659-CT01), PTC Therapeutics (PTC743-NEU-003-FA) and Stealth BioTherapeutics (SPIMD-301), all unrelated to the present work. Prof Timmann reports no disclosures. Prof Synofzik has received consultancy honoraria from Ionis, UCB, Prevail, Orphazyme, Biogen, Servier, Reata, GenOrph, AviadoBio, Biohaven, Zevra, Lilly, and Solaxa, all unrelated to the present manuscript.

Funding Statement: This work was supported by the International Max Planck Research School for Intelligent Systems (IMPRS-IS) (to J.S.) and the Else Kroener-Fresenius-Stiftung Medical Scientist programme ClinbrAIn (to W.I. and M.G.), as well as the Else Kroener-Fresenius Stiftung Clinician Scientist program PRECISE.net (to M.S.).
In addition, this work was supported by the European Union, project European Rare Disease Research Alliance (ERDERA, #101156595) (to M.S.).

Author Declarations: I confirm all relevant ethical guidelines have been followed, and any necessary IRB and/or ethics committee approvals have been obtained. Yes. The details of the IRB/oversight body that provided approval or exemption for the research described are given below: Ethics committee/IRB of University Tuebingen, Germany gave ethical approval for this work. I confirm that all necessary patient/participant consent has been obtained and the appropriate institutional forms have been archived, and that any patient/participant/sample identifiers included were not known to anyone (e.g., hospital staff, patients or participants themselves) outside the research group so cannot be used to identify individuals. Yes. I understand that all clinical trials and any other prospective interventional studies must be registered with an ICMJE-approved registry, such as ClinicalTrials.gov. I confirm that any such study reported in the manuscript has been registered and the trial registration ID is provided (note: if posting a prospective study registered retrospectively, please provide a statement in the trial ID field explaining why the study was not registered in advance). Yes. I have followed all appropriate research reporting guidelines, such as any relevant EQUATOR Network research reporting checklist(s) and other pertinent material, if applicable. Yes.

Data will be made available upon reasonable request. The authors confirm that the data supporting the findings of this study are available within the article and its Supplementary material. Raw data regarding human participants (e.g. clinical data) are not shared freely to protect the privacy of the human participants involved in this study; no consent for open sharing has been obtained.
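The longitudinal effect sizes in the abstract above are reported as rprb, which the abstract does not define. Assuming it denotes the matched-pairs rank-biserial correlation (the effect size commonly paired with the Wilcoxon signed-rank test), a minimal sketch of how such a value could be computed from paired baseline and follow-up measurements is given below. The function name and the numbers are hypothetical illustrations, not study data or the authors' code.

```python
def matched_pairs_rank_biserial(baseline, follow_up):
    """Matched-pairs rank-biserial correlation for paired samples.

    Assumption: this is the quantity abbreviated 'rprb' in the abstract.
    Computed as (W+ - W-) / (W+ + W-), where W+ and W- are the sums of
    ranks of the positive and negative paired differences.
    """
    # Paired differences; zero differences are dropped, as in the
    # Wilcoxon signed-rank test.
    diffs = [f - b for b, f in zip(baseline, follow_up) if f != b]
    if not diffs:
        return 0.0

    # Rank the absolute differences, giving tied values their average rank.
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return (w_plus - w_minus) / (w_plus + w_minus)


# Hypothetical gait-variability values for four participants.
baseline = [10, 20, 30, 40]
one_year = [15, 22, 29, 48]
print(matched_pairs_rank_biserial(baseline, one_year))  # 0.8
```

A value of 1 would mean every participant changed in the same direction; a value near 0 would mean increases and decreases roughly balance out in rank.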

Reduced Age-Dependent Penetrance of a Large FGF14 GAA Repeat Expansion in a 74-Year-Old Woman from a German Family with SCA27B

Towards patient-relevant, trial-ready digital motor outcomes for SPG7: a cross-sectional prospective multi-center study (PROSPAX)

Background and Objectives: With targeted treatment trials on the horizon, identification of sensitive and valid outcome measures becomes a priority for the >100 spastic ataxias. Digital-motor measures, assessed by wearable sensors, are prime outcome candidates for SPG7 and other spastic ataxias. We here aimed to identify candidate digital-motor outcomes for SPG7 – as one of the most common spastic ataxias – that: (i) reflect patient-relevant health aspects, even in mild, trial-relevant disease stages; (ii) are suitable for a multi-center setting; and (iii) assess mobility also during uninstructed walking simulating real life.

Digital gait measures capture 1-year progression in early-stage spinocerebellar ataxia type 2

BACKGROUND: With disease-modifying drugs in reach for cerebellar ataxias, fine-grained digital health measures are highly warranted to complement clinical and patient-reported outcome measures in upcoming treatment trials and treatment monitoring. These measures need to demonstrate sensitivity to capture change, in particular in the early stages of the disease. OBJECTIVE: To unravel gait measures sensitive to longitudinal change in the particularly trial-relevant early stage of spinocerebellar ataxia type 2 (SCA2). METHODS: Multi-center longitudinal study with combined cross-sectional and 1-year interval longitudinal analysis in early-stage SCA2 participants (n=23, including 9 pre-ataxic expansion carriers; median ATXN2 CAG repeat expansion 38 ± 2; median SARA [Scale for the Assessment and Rating of Ataxia] score 4.83 ± 4.31). Gait was assessed using three wearable motion sensors during a 2-minute walk, with analyses focusing on gait measures of spatiotemporal variability shown to be sensitive to ataxia severity, e.g. lateral step deviation. RESULTS: We found significant changes for gait measures between baseline and 1-year follow-up with large effect sizes (lateral step deviation p=0.0001, effect size rprb=0.78), whereas the SARA score showed no change (p=0.67). Sample size estimation indicates a required cohort size of n=43 to detect a 50% reduction in natural progression. Test-retest reliability and Minimal Detectable Change analysis confirm the accuracy of detecting 50% of the identified 1-year change. CONCLUSIONS: Gait measures assessed by wearable sensors can capture natural progression in early-stage SCA2 within just one year, in contrast to a clinical ataxia outcome. Lateral step deviation thus represents a promising outcome measure for upcoming multi-centre interventional trials, particularly in the early stages of cerebellar ataxia.

Competing Interest Statement: J. Seemann, L. Daghsen, M. Cazier, J. Lamy, ML. Welter, A. Giese, and G.
Coarelli report no disclosures. Prof. Durr serves as an advisor to Critical Path Ataxia Therapeutics Consortium and her institution (Paris Brain Institute) receives her consulting fees from Pfizer, Huntix, UCB, Reata, PTC Therapeutics as well as research grants from the NIH, Biogen, Servier, and the National Clinical Research Program, and she partly holds Patent B 06291873.5 on Anaplerotic Therapy of Huntington's Disease and other polyglutamine diseases (2006). Prof. Synofzik has received consultancy honoraria from Ionis, UCB, Prevail, Orphazyme, Servier, Reata, GenOrph, AviadoBio, Biohaven, Zevra, and Lilly, all unrelated to the present manuscript. Dr. Ilg received consultancy honoraria from Ionis Pharmaceuticals, unrelated to the present work.

Funding Statement: We would like to thank all the participants included in this study. We would like to thank BIOGEN and IONIS, which funded the NCT04288128 study, and INSERM, which sponsored the NCT04288128 study (to A. D.). This work was supported by the International Max Planck Research School for Intelligent Systems (IMPRS-IS) (to J.S.) and the Else Kroener-Fresenius-Stiftung Medical Scientist programme ClinbrAIn (to W.I.), as well as the Else Kroener-Fresenius Stiftung Clinician Scientist programme PRECISE.net (to M.S.). Work on this project was supported, in part, by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) No 441409627, as part of the PROSPAX consortium under the frame of EJP RD, the European Joint Programme on Rare Diseases, under the EJP RD COFUND-EJP 825575 (to M.S.
and A.D.).

Author Declarations: I confirm all relevant ethical guidelines have been followed, and any necessary IRB and/or ethics committee approvals have been obtained. Yes. The details of the IRB/oversight body that provided approval or exemption for the research described are given below: Ethics committee/IRB of Sorbonne universite and University Tuebingen, Germany gave ethical approval for this work. I confirm that all necessary patient/participant consent has been obtained and the appropriate institutional forms have been archived, and that any patient/participant/sample identifiers included were not known to anyone (e.g., hospital staff, patients or participants themselves) outside the research group so cannot be used to identify individuals. Yes. I understand that all clinical trials and any other prospective interventional studies must be registered with an ICMJE-approved registry, such as ClinicalTrials.gov. I confirm that any such study reported in the manuscript has been registered and the trial registration ID is provided (note: if posting a prospective study registered retrospectively, please provide a statement in the trial ID field explaining why the study was not registered in advance). Yes. I have followed all appropriate research reporting guidelines, such as any relevant EQUATOR Network research reporting checklist(s) and other pertinent material, if applicable. Yes.

Data will be made available upon reasonable request. The authors confirm that the data supporting the findings of this study are available within the article. Raw data regarding human subjects (e.g. clinical data) are not shared freely to protect the privacy of the human subjects involved in this study; no consent for open sharing has been obtained.